Dive Brief:

- The 1996 law that protects social media companies and other internet platforms from liability for third-party content goes on trial at the U.S. Supreme Court this week.

- In Gonzalez vs. Google, the internet company is defending itself from claims by the family of a terrorist attack victim that its algorithm for recommending videos on YouTube, among other things, isn’t the kind of service that is protected by Sec. 230 immunity.

- In Twitter vs. Taamneh, the company seeks to overturn an appeals court decision holding that, by not doing enough to keep certain content off its site, it aided and abetted terrorists who killed dozens of people, including a member of the family that sued the company.

Dive Insight:

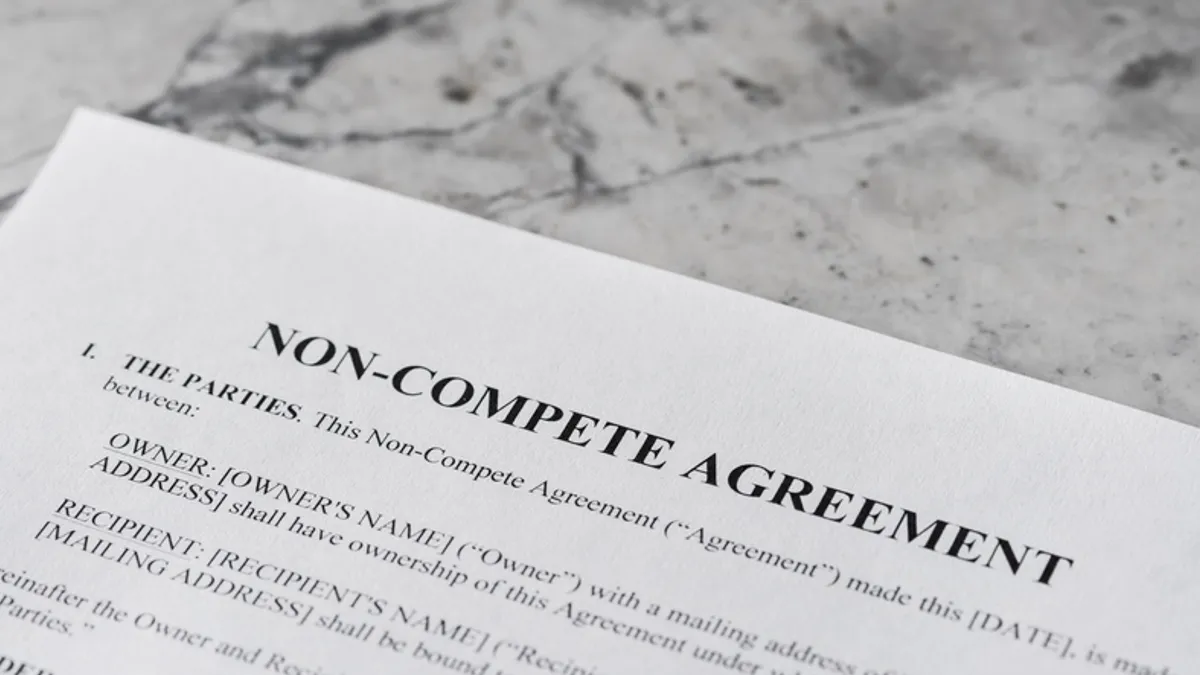

Section 230 of the Communications Decency Act was enacted in the early years of the public-facing web to help the internet grow into a platform on which different points of view could be shared as a way to encourage the widespread dissemination of information.

The Gonzalez case doesn’t go directly after Google’s liability protection for third-party content it hosts on YouTube, which it owns; rather, it goes after YouTube’s algorithm for suggesting which videos users might want to watch, among other things. Those suggestions, as well as links to other content, are entirely the company’s own and not the kind of third-party content protected under the law, the family argues.

“YouTube selected the users to whom it would recommend ISIS videos based on what YouTube knew about each of the millions of YouTube viewers, targeting users whose characteristics suggested they would be interested in ISIS videos,” attorneys for the Gonzalez family said.

Google says Sec. 230 doesn’t just protect internet companies from liability for third-party content; it protects the companies from being treated as publishers, which, unlike internet companies, make editorial decisions about the content they publish.

“If plaintiffs could evade Section 230 … by targeting how websites sort content or trying to hold users liable for liking or sharing articles, the internet would devolve into a disorganized mess and a litigation minefield,” counsel for Google said.

In the Taamneh case, the focus is on the Antiterrorism Act, but depending on what the court decides, there could be ramifications for Sec. 230.

The 9th Circuit Court of Appeals held that Twitter, along with Google and Facebook, aided and abetted terrorism, separate from Sec. 230 protection, by not doing enough to keep content that they knew helped terrorism off their sites.

“Defendants’ assistance to ISIS was substantial,” plaintiffs adequately argued, the appeals court said in its majority opinion. “Defendants provided services that were central to ISIS’s growth and expansion, and that this assistance was provided over many years.”

Twitter says it works hard to keep dangerous content off its site and that it would be held to an impossible standard if it’s found liable for what terrorist groups do. The Antiterrorism Act, the company says, is meant for parties that assist in a specific act of terrorism.

“Plaintiffs acknowledge that defendants regularly removed terrorist content from their platforms, and a plaintiff can always allege that a defendant could have done more,” attorneys for Twitter said. “Congress did not enact a statute that attaches liability based on such an ill-defined and capacious theory.”

The standard Twitter favors, the Taamneh family argued, is so narrow that no internet platform could ever be held liable under the law.

The Antiterrorism Act, “thus construed, would generally apply only in circumstances that would be meaningless, such as to a fellow terrorist who handed a killer a firearm,” the attorneys said.

Both cases are being heard this week by the top court: the Gonzalez case on Tuesday and the Twitter case on Wednesday.

How the court rules in one or both cases could mark a sea change in the relationship between internet companies and the content they serve up, and in how they direct people to it, by increasing the companies’ liability exposure.